How does the mind work—and especially how does it learn? Teachers’ instructional decisions are based on a mix of theories learned in teacher education, trial and error, craft knowledge, and gut instinct. Such knowledge often serves us well, but is there anything sturdier to rely on?

Cognitive science is an interdisciplinary field of researchers from psychology, neuroscience, linguistics, philosophy, computer science, and anthropology who seek to understand the mind. In this regular American Educator column, we consider findings from this field that are strong and clear enough to merit classroom application.

QUESTION: I often hear that growing up with smartphones and other technology has “rewired” children’s brains—and I see that my students in recent years have a much harder time paying attention than they did in the past. What does this “rewiring” mean? Are children today less able to focus attention?

ANSWER: There’s been a great deal of research on this question in the last 10 years, and it appears that children’s ability to control their attention has not been compromised, or if it has, it’s a small effect and would not account for what educators feel they see in the classroom. But it’s also possible that the problem is not that students can’t pay attention, but rather that they often don’t want to. I’ll review some data here suggesting that digital entertainment has made children quicker to conclude that they are bored. There is also evidence that, compared to a generation ago, children are less willing to wait for fun—they want it immediately.

By the start of this school year, more than half of US states had enacted laws banning or regulating the use of cellphones by students in school, and most others are considering such measures.1 Legislators suspect that cellphones have contributed to the dramatic increase in mental health issues among American teenagers, and they are also responding to the common-sense observation that phones are a potent distractor making it hard for students to learn.2 A recent nationally representative survey showed that 72 percent of high school teachers call cellphone distraction “a major problem” in the classroom.3

Banning cellphones in school may remove the immediate source of distraction, but are educators facing a bigger problem here? Has the long-term use of digital technologies rendered many students unable to sustain their focus?

Some observers think so, most famously journalist Nicholas Carr, as described in his 2011 book, The Shallows.4 Here’s his two-part argument: First, the things we do on digital platforms often demand or encourage rapid shifts of attention. If you play an action video game, your eyes dart around the screen in search of bad guys. On social media apps, you skim through your feed, scanning for interesting content. And whatever you find yourself doing on a digital device, another app always beckons, so your attention seldom alights anywhere for extended engagement. The second part of the argument is that, contrary to earlier scientific dogma, researchers now have evidence that the brain changes with experience, even in adulthood.5 Considering both our habitual shifts of attention and the changeable brain, Carr surmises that you unintentionally train your brain to shift attention frequently, and eventually, you have no choice. You can’t sustain focus.

Many teachers report seeing student behavior consistent with that hypothesis. In a 2024 survey, when asked whether their students’ reading stamina had changed since 2019, 53 percent of third- through eighth-grade teachers said it had “decreased a lot.”6 Another 30 percent said it had “decreased a little.” College professors assert that they see the same problem. Although we do not have data, recent articles7 are filled with anecdotes about college students at elite universities having trouble sustaining attention to read book-length texts.*

Are teachers seeing evidence that Carr was right? Since 2010, a great deal of research has addressed the question of whether the use of digital devices lessens the ability to sustain attention, and as we will see, the evidence for that conclusion is weak. But that doesn’t mean these devices have no effect on the ways children (or adults) pay attention. For example, it’s possible that children still can pay attention, but they choose not to—perhaps because the immediate rewards of digital devices have rendered them less willing to sustain focus on challenging learning tasks. Or maybe students today experience boredom more often than children of the past because they unconsciously compare schoolwork to the enticing activities readily available on their cellphones. Let’s look at the evidence.

Digital Content Directly Affects Attention

How could researchers determine whether extended use of digital devices leaves people unable to focus? A straightforward test would compare the ability to focus among students who engage in a great deal of digital activity and those who seldom engage in it. Many researchers have taken that tack. They often test children from infancy to about age six separately from older children, reasoning that the young brain is more vulnerable to change.

And the results? For both older and younger children, the average of dozens of studies reveals a modest negative correlation: More screen time is weakly associated with less ability to control attention.8

Now, this kind of study has an obvious limitation—it finds a correlation, but correlation is not causation. Thus, although one is tempted to conclude that digital activities negatively impact attention, it’s also possible that children who have greater difficulty focusing their attention find digital activities more appealing than children who do not have such challenges.

Researchers have tried to address this problem by conducting longitudinal studies. That means they measure screen time and attention (at, say, age nine), and then measure them again in the same children months or years later. If more screen time at age nine predicts worse attentional control at age 11—even after accounting for the level of attentional control at age nine—that suggests screen time may contribute to later attention problems. Conversely, if worse attentional control at age nine predicts increased screen time at age 11, that indicates that attention difficulties may lead children to use screens more.
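
The phrase "even after accounting for the level of attentional control at age nine" can be made concrete with a partial correlation. The sketch below only illustrates that logic; it is not the analysis used in any study cited here, and published longitudinal studies typically fit more elaborate models. The function names and the choice to pass three equal-length lists of scores are assumptions made for this example.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two lists of the same length (at least 2 values)")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("each list must contain some variation")
    return cov / sqrt(var_x * var_y)


def partial_r(predictor, outcome, control):
    """Correlation between predictor and outcome with the control held constant.

    Hypothetical usage: predictor = screen time at age 9,
    outcome = attentional control at age 11,
    control = attentional control at age 9.
    """
    r_po = pearson_r(predictor, outcome)
    r_pc = pearson_r(predictor, control)
    r_oc = pearson_r(outcome, control)
    return (r_po - r_pc * r_oc) / sqrt((1 - r_pc ** 2) * (1 - r_oc ** 2))
```

A negative value from `partial_r(screen_age9, attention_age11, attention_age9)` would fit the first pattern described above. Swapping the roles, as in `partial_r(attention_age9, screen_age11, screen_age9)`, probes the reverse direction. The variable names here are placeholders, not data from any study.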

Using this method, most studies of younger (birth to pre-K) or older (K–12) children indicate that more screen time is probably causing poorer attentional control.9 The size of the observed relationship varies, but on average, it’s small.

Yet even with this improved research design, we cannot be confident that digital activities compromise attention. Other factors could be associated with “more digital activity,” and these other factors may diminish attentional capacity (so digital activity itself is a bystander, not the culprit). More time with digital devices doesn’t happen randomly; it tends to happen in certain contexts and with particular styles of parenting. Parents and guardians may allow their child more access to screens in an effort to improve their child’s mood or behavior.10 Or screen activities may keep the child occupied so the parent has time for their own pursuits.11 Wealthy parents may have easier access to pastimes for their child that are not screen-based. In each case, it may be elements of the context that have the critical effect on attention, not digital activities per se.

We encounter the same questions about causality when we try to interpret outcome differences that seem attributable to the quality of digital content. Several studies have reported that the impact of screen time on the development of attention in young children depends on what kids do during that screen time.12 These studies suggest that if parents choose educational content and interact with their child while they watch together, screen time doesn’t affect attention, but attention is compromised if parents let children watch noneducational programming on their own. But again, if we were to compare parents who did and did not have the time and inclination to select programming and watch it with their children, their households would probably differ in many ways, not just the nature of screen time. So even the modest effect we see via longitudinal studies could overestimate the impact of digital devices on attention.

Still another type of research compares the attention of kids today to that of kids who grew up with more limited access to digital devices. This procedure is actually a closer fit to the way we usually talk about the problem. When we say, “Kids today just seem unable to concentrate,” we’re comparing them to our memory of what kids were like 10 or 20 years ago.

Comparing kids today to kids 20 years ago can be done if the same test of attention has been in use for 20 (or more) years. That has happened because some mental measures have become standards, used for years and across many contexts. (Of course, if we see a difference in attention over time, we still can’t be sure what caused it. Lots of things have changed in the last 20 years.)

One study reviewed the results of 179 research reports published between 1990 and 2021; in each study, researchers had administered the d2 Test of Attention, a widely used assessment.13 In this paper-and-pencil task, the subject sees a sheet of figures and must cross out the targets—the letter d with two dashes over it. The nontargets are the letter d with one or three dashes or the letter p with one to four dashes. This task requires that subjects direct attention to specific visual features, inhibit responses to highly similar (but incorrect) items, and maintain focus on a long and repetitive task. Researchers found that children’s performance from 1990 until 2021, on average, did not change. Adults actually improved slightly.

Another study examined performance on two working-memory tasks.14 For one task, the subject heard a sequence of digits (for example, 9, 2, 4) and tried to repeat them in reverse (4, 2, 9). For the other task, subjects were asked to tap in reverse order a series of spatial locations on a computer screen. Data were collected from 1975 to 2016 for the digit task and from 1989 to 2016 for the spatial task, comprising over 135,000 participants in 1,754 samples. The results showed a small diminishment in performance across years for both the digit and the spatial tasks (with effect sizes of d = −.06 and d = −.17, respectively†).

What’s the takeaway? It’s clear that discerning whether digital activities compromise attention is a difficult research problem. Based on three different research strategies, we can only conclude that screen time may modestly degrade attention. We don’t have strong causal evidence, and the correlation seems to come and go across studies.

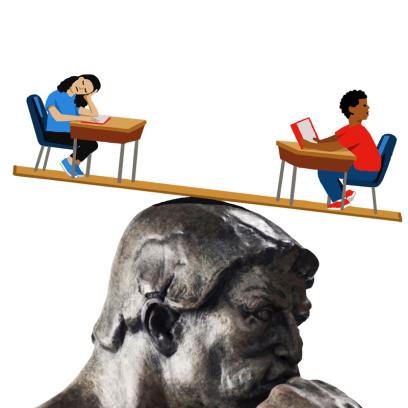

This presents a paradox: Many teachers think that students are much more distractible than they were in the recent past, but research shows an effect that comes and goes and is modest when it is observed. How can we make sense of this contradiction?

Digital Content Changes How We Value Rewards

When kids are distractible, it’s natural to suspect attention is to blame. But maybe the problem is not that they can’t pay attention, but rather that they don’t want to.

For many students, schoolwork is often challenging and not very engaging. One motivator to maintain attention is the promise of some later reward. That might be the satisfaction of understanding the content, the pride of receiving a good grade, or avoiding the disapproval of teachers or family members.

What makes students more or less willing to endure something unpleasant in exchange for an anticipated reward? How much they value the reward, obviously, but also how long they must wait for it. Immediate gratification is appealing because the same payoff seems less valuable if it’s delayed.16

Here’s the way researchers study this phenomenon. Let’s say I offer you a choice: Would you prefer that I give you 10 dollars tomorrow, or 10 dollars a week from tomorrow? Virtually everyone would prefer the money sooner because you have an extra week to enjoy whatever you spend the money on.

Suppose I compensate you for waiting. You can choose between 10 dollars tomorrow or 11 dollars a week from tomorrow. You may figure that one extra dollar does not offer enough incentive to wait a week, and so you’d still pick the 10 dollars tomorrow. I can keep making offers—varying both the delay and the amount of money—to figure out your delay discount rate—that is, how much compensation would induce you to wait to get a reward. Some people hate to wait, and I might need to promise 20 dollars (versus an immediate 10 dollars) to get them to wait a week, whereas others would require only an extra dollar.17
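
One common way to summarize choices like these is a hyperbolic discounting model, in which a reward's present value is the amount divided by (1 + k × delay), so a larger k means steeper discounting. The article does not commit to a particular formula, so the model, the function names, and the simplification of counting the delay from the sooner payment are all assumptions in this rough sketch.

```python
def discounted_value(amount, delay_days, k):
    """Present value of a delayed reward under hyperbolic discounting (assumed model)."""
    return amount / (1 + k * delay_days)


def indifference_k(sooner_amount, later_amount, extra_delay_days):
    """Discount rate k at which the later reward is worth exactly the sooner one.

    Solves sooner_amount = later_amount / (1 + k * extra_delay_days) for k.
    """
    if sooner_amount <= 0 or extra_delay_days <= 0:
        raise ValueError("sooner amount and extra delay must be positive")
    if later_amount < sooner_amount:
        raise ValueError("later amount should be at least the sooner amount")
    return (later_amount / sooner_amount - 1) / extra_delay_days


# Someone who needs only one extra dollar to wait a week
patient_k = indifference_k(10, 11, 7)

# Someone who needs the reward doubled to wait a week
impatient_k = indifference_k(10, 20, 7)

# The second person's k is ten times the first person's
ratio = impatient_k / patient_k
```

Under this assumed model, the person who demands 20 dollars has a discount rate ten times that of the person who accepts 11 dollars, which is one way to put a number on "some people hate to wait."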

A high delay discount rate is an aspect of impulsivity; a more impulsive person will grab a smaller reward that’s available now, rather than waiting for a larger reward later.18 One standard measure of delay discount rate is obtained by asking participants to answer about 30 questions like the one posed earlier (“Would you prefer 10 dollars tomorrow or 20 dollars in eight days?”19). Importantly, a high delay discount rate is associated with (but may or may not cause‡) failure to finish high school22 and poorer grades among college students.23

It’s possible that the use of digital technologies has changed children’s delay discount rates for the worse because instant gratification is such a prominent characteristic of digital activities. When you’re on a phone or computer, there is little reason to endure boredom because there is always something else you might do on the device. What’s more, accessing that alternate activity is easy—you just keep scrolling, or you switch apps. Perhaps that impulsivity carries over to other activities. Students can pay attention, but if they get bored, they are quick to switch their attention to something else.

Some research supports this possibility. People who show “problematic use” of the internet generally24 or of specific apps like Facebook25 (as identified by self-report measures) show higher discount rates than average users. Of course, we’re interested in more than problematic use. Do we still see a relationship between delay discount rate and digital activity among more typical users? The answer is a tentative “yes.” A few studies show a consistent but still modest relationship (approximately an effect size of d = 0.25) between technology use and delay discount rate. These studies have used different measures of tech engagement, including self-reported screen time,26 actual screen time measured on the device,27 and self-reported time on social media.28 Thus, the observed relationship with delay discount rate is not a quirk of which measure of digital-device use we happened to pick.

So, there is a possible causal chain that using digital devices increases the delay discount rate, and then the higher delay discount rate causes poorer academic performance. But the picture remains incomplete. As before, the available data are largely correlational. And delay discount rates may not have been measured in the best way. That is, researchers typically determine individuals’ rates by asking them to answer questions about money. But when a student diverts their attention to their phone, they aren’t getting a financial reward but rather one of information. If I delay getting a financial reward by a week, the money still has the same objective value, even if I think of it differently. But if a student delays reading a text message or checking social media, the information may lose value. Social information is perishable.

There is limited research on the subject, but one study of college students suggested that the value of money decays over the course of weeks, whereas information in text messages decays in hours.29 Researchers may find that delay discount rates are a stronger predictor of distractibility if they measure discount rates for digital content. For example, one study showed that people’s delay discount rate of the value of texting predicts their likelihood of texting while driving, whereas their delay discount rate for a monetary reward does not.30

Digital Content Changes Boredom Calculations

Perhaps the same content that was interesting enough to hold the attention of students a generation ago might be deemed boring by today’s kids. It’s easy to dismiss this account as an example of generational bias. Don’t older people always think that they, as children, were superior to today’s youth? But psychological theories of boredom suggest there may be more to it than that.

Contemporary theories of boredom emphasize its function.31 Boredom alerts us that it’s time to change activities because we judge that whatever we’re doing now has less value than something else we might do. Theories vary in what they propose goes into our calculation of “value.” For example, one theory suggests that we feel bored when we detect a mismatch between the current activity and our valued goals,32 and another proposes that we experience boredom when we sense that we aren’t learning anything.33

However “value” is calculated by the mind, if boredom’s function is to prompt a change to a more fruitful activity, that implies the existence of a mental mechanism to calculate opportunity costs. The tediousness of the current task is based not only on the characteristics of the task, but also on an unconscious comparison with what else you might be doing. Thus, you feel more bored when another available activity is deemed more valuable than the current one.34

That sensitivity to context seems plausible, or even likely. For example, consider a student who finds a novel interesting when she’s on an airplane and has forgotten to bring her phone. Might she not deem the same novel less interesting if she has her phone with her? She’s bored because she (unconsciously) compares the interest of the novel to that of watching YouTube videos.

Some research supports this proposal. In one experiment, subjects were led to a small room where they were told to sit for 15 minutes and “entertain themselves with their thoughts.”35 For half the subjects, the room was barren, containing only a desk, chair, filing cabinet, and chalkboard without chalk. For the other subjects, the room contained a laptop with an open web browser, a partially completed Lego puzzle, a partially completed jigsaw puzzle, sheets of paper with crayons, and chalk for the chalkboard. Participants in this room were instructed not to interact with the objects, but to just sit with their thoughts.

At the end of 15 minutes, subjects rated their experience on several scales, and the people who were in the room that held interesting activities reported significantly more boredom than the people who were in the empty room.

Compared to students a generation ago, students today may feel bored more often because they may nearly always find themselves in environments filled with fun activities they are not doing. In other words, students may unconsciously compare whatever they are doing to the fun activities available on the phones in their pockets.§

Other correlational data are consistent with this idea. Much research shows a correlation between cellphone use and boredom:36 People who report that they are frequently bored also report using their cellphones a lot. It’s possible that causation moves in the other direction—that people who are easily bored turn to their phones more often to relieve their boredom. But there are also data showing that people feel more bored37 and more distracted38 after using their cellphones, perhaps because having recently used the phone is a reminder of how engaging digital activities are. Although only about a third of teens say they enjoy social media “a lot” and nearly two-thirds say they enjoy online videos “a lot,”39 enjoyment only needs to be intermittent for it to create a powerful pull. People don’t become compulsive gamblers because they always win, but rather because they sometimes win; many researchers have compared the thrill a teen gets from an occasional successful moment on social media to the occasional jackpot for a gambling addict.40

Implications

How might the results reviewed here influence the thinking of parents, educators, and policymakers when it comes to children and digital activities?

The first conclusion is both cheering and dispiriting. Digital devices do not seem to degrade students’ capacity to pay attention, which is clearly good news. But at the same time, it’s useful to know the nature of your enemy. Despite a paucity of hard data on the matter, I believe the overwhelming majority of teachers who say that it’s more difficult to get students to stay on task than it was a generation ago. How are we supposed to address the problem if we don’t know what’s causing it?

I’ve offered two alternative explanations for what teachers have observed. Each is a variation on this idea: It’s not that students can’t pay attention, but rather that they more readily choose not to. The delay discounting story suggests that experience with digital devices makes students place a higher value on near-term rewards (e.g., watching a funny video) and a lower value on rewards they anticipate getting in the distant future (e.g., being well prepared for college or an apprenticeship). The boredom explanation suggests that digital devices prompt students to more readily conclude they are bored because all nondigital activities are unconsciously compared to entertainment on their phone, and the phone always seems more attractive.

I’ve reviewed data supporting each hypothesis, but there’s insufficient research to convince us that either—or both—play a substantial role in the observed change in children’s behavior. Still, we should probably hope these explanations are valid, because both suggest that the degradation of attention has been learned. And what is learned can potentially be unlearned.

If digital devices make students overvalue near-term rewards, perhaps children can be coaxed to reassess the importance of long-term rewards by making them more explicit or salient. For example, portfolios of student work might help students see and appreciate how much progress they have made in the quality of their work throughout a school year and reflect on the necessity of hard work to access the satisfaction that progress brings.

If digital devices prompt students to set a low threshold for concluding “this is boring,” it may be that, with the consistent absence of digital devices, the unconscious mind will learn that the phone is unavailable in a particular context, and the calculation of boredom will adjust accordingly. We might hope that cellphone bans in school would induce such learning, and one would predict that students would learn it more quickly with bell-to-bell bans (rather than allowing phones between classes and at lunch and recess) because they would develop a consistent association between school and no phone. Indeed, schools and districts could help test this hypothesis by gathering relevant data as bans are implemented (and perhaps changed or rescinded over time).

We should keep in mind that children’s use of digital devices may have consequences across a variety of outcomes, touching on their mental and physical health,41 social-emotional skills,42 and academic performance.43 This article has reviewed data on just one cognitive outcome, namely, attention. In addition, it has focused on long-term consequences. The short-term consequences of digital device use are well known: They frequently distract users from other tasks.44 Clearly, any recommendations for children’s use of digital devices must take a broad view of likely outcomes.

That said, attention is the nexus of thought; it is essential for all of the cognitive processes we want students to develop, such as reading deeply, solving problems, and thinking creatively. If students’ use of digital devices is degrading their ability to deploy attention effectively—and teachers are veritably screaming that it is—that phenomenon should be near the top of our priority list for education research and policy.

Daniel T. Willingham is a professor of cognitive psychology at the University of Virginia. He is the author of several books, including the bestseller Why Don’t Students Like School? and Outsmart Your Brain: Why Learning Is Hard and How You Can Make It Easy. Readers can pose questions to “Ask the Cognitive Scientist” by sending an email to ae@aft.org. Future columns will try to address readers’ questions. This article is adapted with permission from “Pay Attention, Kid! Has the Use of Digital Technology Impaired Students’ Ability to Focus?,” which Willingham published in Education Next in September 2025 and is available at go.aft.org/1p3.

*For a detailed look at this issue, see “Beyond Excerpts: Teaching with Whole Books Boosts Comprehension and Engagement.”

†To give these effect sizes more meaning, here’s an example of how they are measured: Imagine two rooms, each containing 100 adult men. The average height of the men in the two rooms differs slightly: 69.6 inches in one room and 69.1 inches in the other, although there’s plenty of variation, with tall and short men in each room. The mean height of adult men in the United States is about 68.9 inches (almost 5′9″),15 with a standard deviation of roughly 2.9 inches. To calculate effect size, you divide the difference between the two rooms—0.5 inches—by the standard deviation of 2.9 inches. That yields an effect size of about d = 0.17. Yet, if you saw these two groups of men side by side, do you think you’d notice the difference in height between the groups?
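
The arithmetic in this footnote can be written out as a short calculation. This is a minimal sketch that uses only the figures given in the footnote; the function names are made up for illustration, and the single standard deviation follows the footnote's simplification rather than a pooled estimate from two samples.

```python
def effect_size_d(mean_a, mean_b, standard_deviation):
    """Standardized mean difference: (mean_a - mean_b) / standard deviation."""
    if standard_deviation <= 0:
        raise ValueError("standard deviation must be positive")
    return (mean_a - mean_b) / standard_deviation


def raw_difference(d, standard_deviation):
    """Convert an effect size back into the original units (here, inches)."""
    return d * standard_deviation


# The two rooms from the footnote: 69.6 vs. 69.1 inches, SD of 2.9 inches
rooms_d = effect_size_d(69.6, 69.1, 2.9)  # about 0.17

# What the smaller reported effect (0.06 in size) would look like as a height gap
small_gap_inches = raw_difference(0.06, 2.9)  # under a fifth of an inch
```

Expressed as heights, the smaller of the two working-memory effects would correspond to a gap of less than a fifth of an inch between the rooms.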

‡For some tasks, social environment may interact with discount rate. For example, in a well-known demonstration, researchers showed that children responded to the famous marshmallow task differently depending on family wealth. Children from disadvantaged homes ate the marshmallow quickly, which can be interpreted as smart, not impulsive, if one lives in an environment of uncertainty.20 But the relationship of impulsivity to life outcomes depends on the task and is often still present when family income is controlled for21—and for some of the relationships, such as drug use or obesity, the argument for rationality doesn’t seem to apply.

§For a related “Ask the Cognitive Scientist” article on how to increase engagement and when to use attention grabbers, see “Why Do Students Remember Everything That’s on Television and Forget Everything I Say?” in the Summer 2021 issue of American Educator: go.aft.org/hbu.

Endnotes

1. A. Prothero, L. Langreo, and A. Klein, “Which States Ban or Restrict Cellphones in Schools?,” Education Week, November 3, 2025, edweek.org/technology/which-states-ban-or-restrict-cellphones-in-schools/2024/06; and J. Amy, “Majority of US States Now Have Laws Banning or Regulating Cellphones in Schools, with More to Follow,” Associated Press, May 21, 2025, apnews.com/article/cellphones-phones-school-ban-states-c6a54feb9d2661e04989b7cdd5b2821b.

2. 2022 National Healthcare Quality and Disparities Report (Agency for Healthcare Research and Quality, US Department of Health and Human Services, October 2022), ahrq.gov/research/findings/nhqrdr/nhqdr22/index.html.

3. J. Hatfield, “72% of U.S. High School Teachers Say Cellphone Distraction Is a Major Problem in the Classroom,” Pew Research Center, June 12, 2024, pewresearch.org/short-reads/2024/06/12/72-percent-of-us-high-school-teachers-say-cellphone-distraction-is-a-major-problem-in-the-classroom.

4. N. Carr, The Shallows: What the Internet Is Doing to Our Brains (W. W. Norton & Company, 2011).

5. B. Draganski et al., “Changes in Grey Matter Induced by Training,” Nature 427 (2004): 311–12.

6. S. Sawchuk, “How to Build Students’ Reading Stamina,” Education Week, January 15, 2024, edweek.org/teaching-learning/how-to-build-students-reading-stamina/2024/01.

7. See, for example, R. Horowitch, “The Elite College Students Who Can’t Read Books,” The Atlantic, October 1, 2024, theatlantic.com/magazine/archive/2024/11/the-elite-college-students-who-cant-read-books/679945.

8. K. Ioannidis et al., “Cognitive Deficits in Problematic Internet Use: Meta-Analysis of 40 Studies,” British Journal of Psychiatry 215, no. 5 (2019): 639–46; R. Jusienė et al., “Executive Function and Screen‐Based Media Use in Preschool Children,” Infant and Child Development 29, no. 1 (2020): e2173; and N. Lakicevic et al., “Screen Time Exposure and Executive Functions in Preschool Children,” Scientific Reports 15, no. 1 (2025): 1839.

9. C. Fitzpatrick et al., “Associations Between Preschooler Screen Time Trajectories and Executive Function,” Academic Pediatrics 25, no. 2 (2025): 1–7; N. Gueron-Sela and A. Gordon-Hacker, “Longitudinal Links Between Media Use and Focused Attention Through Toddlerhood: A Cumulative Risk Approach,” Frontiers in Psychology 11 (November 1, 2020); J. Kim and M. Tsethlikai, “Longitudinal Relations of Screen Time Duration and Content with Executive Function Difficulties in South Korean Children,” Journal of Children and Media 18, no. 3 (2024): 386–404; J. McNeill et al., “Longitudinal Associations of Electronic Application Use and Media Program Viewing with Cognitive and Psychosocial Development in Preschoolers,” Academic Pediatrics 19, no. 5 (2019): 520–28; L. Salmerón et al., “Did Screen Reading Steal Children’s Focus? Longitudinal Associations Between Reading Habits, Selective Attention and Text Comprehension,” Journal of Research in Reading 48, no. 2 (2025): 175–98; and B. Xiao et al., “Examining Self-Regulation and Problematic Smartphone Use in Canadian Adolescents: A Parallel Latent Growth Modeling Approach,” Journal of Youth and Adolescence 54, no. 2 (2025): 468–79.

10. A. Gordon-Hacker and N. Gueron-Sela, “Maternal Use of Media to Regulate Child Distress: A Double-Edged Sword? Longitudinal Links to Toddlers’ Negative Emotionality,” Cyberpsychology, Behavior, and Social Networking 23, no. 6 (2020): 400–405.

11. A. Kılıç et al., “Exposure to and Use of Mobile Devices in Children Aged 1–60 Months,” European Journal of Pediatrics 178, no. 2 (2018): 221–27.

12. M. Bal et al., “Examining the Relationship Between Language Development, Executive Function, and Screen Time: A Systematic Review,” PLOS ONE 19, no. 12 (2024): e0314540.

13. D. Andrzejewski, E. Zeilinger, and J. Pietschnig, “Is There a Flynn Effect for Attention? Cross-Temporal Meta-Analytical Evidence for Better Test Performance (1990–2021),” Personality and Individual Differences 216 (January 2024): 112417.

14. P. Wongupparaj et al., “The Flynn Effect for Verbal and Visuospatial Short-Term and Working Memory: A Cross-Temporal Meta-Analysis,” Intelligence 64 (September 2017): 71–80.

15. C. Fryar et al., Anthropometric Reference Data for Children and Adults: United States, August 2021–August 2023 (National Center for Health Statistics, Centers for Disease Control and Prevention, US Department of Health and Human Services, June 2025), 9, table 8, cdc.gov/nchs/data/series/sr_03/sr03-050.pdf.

16. L. Green, A. Fry, and J. Myerson, “Discounting of Delayed Rewards: A Life-Span Comparison,” Psychological Science 5, no. 1 (1994): 33–36.

17. R. Thaler, “Some Empirical Evidence on Dynamic Inconsistency,” Economics Letters 8, no. 3 (1981): 201–7.

18. G. Ainslie, “Specious Reward: A Behavioral Theory of Impulsiveness and Impulse Control,” Psychological Bulletin 82, no. 4 (1975): 463–96.

19. K. Kirby, N. Petry, and W. Bickel, “Heroin Addicts Have Higher Discount Rates for Delayed Rewards Than Non-Drug-Using Controls,” Journal of Experimental Psychology: General 128, no. 1 (1999): 78–87.

20. C. Duran and D. Grissmer, “Choosing Immediate Over Delayed Gratification Correlates with Better School-Related Outcomes in a Sample of Children of Color from Low-Income Families,” Developmental Psychology 56, no. 6 (2020): 1107–20.

21. T. Moffitt et al., “A Gradient of Childhood Self-Control Predicts Health, Wealth, and Public Safety,” Proceedings of the National Academy of Sciences 108, no. 7 (2011): 2693–98.

22. M. Castillo, J. Jordan, and R. Petrie, “Discount Rates of Children and High School Graduation,” Economic Journal 129, no. 619 (2019): 1153–81.

23. G. Winston, K. Kirby, and M. Santiesteban, “Impatience and Grades: Delay-Discount Rates Correlate Negatively with College GPA,” Williams Project on the Economics of Higher Education, Williams College, 2002, ideas.repec.org/p/wil/wilehe/63.html.

24. Y. Cheng et al., “The Relationship Between Delay Discounting and Internet Addiction: A Systematic Review and Meta-Analysis,” Addictive Behaviors 114 (March 2021): 106751.

25. D. Delaney, L. Stein, and R. Gruber, “Facebook Addiction and Impulsive Decision-Making,” Addiction Research and Theory 26, no. 6 (2018): 478–86.

26. H. Wilmer and J. Chein, “Mobile Technology Habits: Patterns of Association Among Device Usage, Intertemporal Preference, Impulse Control, and Reward Sensitivity,” Psychonomic Bulletin & Review 23, no. 5 (2016): 1607–14.

27. T. Endert and P. Mohr, “Likes and Impulsivity: Investigating the Relationship Between Actual Smartphone Use and Delay Discounting,” PLOS ONE 15, no. 11 (2020): e0241383.

28. D. Pan et al., “Time on Social Networking Sites Is Associated with Impulsive Decision-Making,” Behaviour and Information Technology 44, no. 9 (2025): 1760–65.

29. P. Atchley and A. Warden, “The Need of Young Adults to Text Now: Using Delay Discounting to Assess Informational Choice,” Journal of Applied Research in Memory and Cognition 1, no. 4 (2012): 229–34.

30. Y. Hayashi et al., “A Behavioral Economic Analysis of Texting While Driving: Delay Discounting Processes,” Accident Analysis & Prevention 97 (December 2016): 132–40.

31. S. Bench and H. Lench, “On the Function of Boredom,” Behavioral Sciences 3, no. 3 (2013): 459–72.

32. E. Westgate and T. Wilson, “Boring Thoughts and Bored Minds: The MAC Model of Boredom and Cognitive Engagement,” Psychological Review 125, no. 5 (2018): 689–713.

33. M. Agrawal et al., “The Temporal Dynamics of Opportunity Costs: A Normative Account of Cognitive Fatigue and Boredom,” Psychological Review 129, no. 3 (2022): 564–85.

34. Z. Wojtowicz, N. Chater, and G. Loewenstein, “Boredom and Flow: An Opportunity Cost Theory of Motivational Attention,” Social Science Research Network, March 13, 2019, ssrn.com/abstract=3339123.

35. A. Struk et al., “Rich Environments, Dull Experiences: How Environment Can Exacerbate the Effect of Constraint on the Experience of Boredom,” Cognition and Emotion 34, no. 7 (2020): 1517–23.

36. A.-L. Camerini, S. Morlino, and L. Marciano, “Boredom and Digital Media Use: A Systematic Review and Meta-Analysis,” Computers in Human Behavior Reports 11 (August 2023): 100313; and A. Ksinan, J. Mališ, and A. Vazsonyi, “Swiping Away the Moments That Make Up a Dull Day: Narcissism, Boredom, and Compulsive Smartphone Use,” Current Psychology 40, no. 6 (2019): 2917–26.

37. J. Dora et al., “Fatigue, Boredom and Objectively Measured Smartphone Use at Work,” Royal Society Open Science 8, no. 7 (2021): 201915.

38. T. Siebers et al., “Social Media and Distraction: An Experience Sampling Study Among Adolescents,” Media Psychology 25, no. 3 (2022): 343–66.

39. V. Rideout et al., The Common Sense Census: Media Use by Tweens and Teens, 2021 (Common Sense, 2022), commonsensemedia.org/sites/default/files/research/report/8-18-census-integrated-report-final-web_0.pdf.

40. R. James and R. Tunney, “The Need for a Behavioural Analysis of Behavioural Addictions,” Clinical Psychology Review 52 (March 2017): 69–76.

41. N. Zeeni et al., “Media, Technology Use, and Attitudes: Associations with Physical and Mental Well-Being in Youth with Implications for Evidence-Based Practice,” Worldviews on Evidence-Based Nursing 15, no. 4 (2018): 304–12.

42. A. Rodman et al., “Within-Person Fluctuations in Objective Smartphone Use and Emotional Processes During Adolescence: An Intensive Longitudinal Study,” Affective Science 5, no. 4 (2024): 332–45.

43. D. Caballero-Julia, J. Martín-Lucas, and L. Andrade-Silva, “Unpacking the Relationship Between Screen Use and Educational Outcomes in Childhood: A Systematic Literature Review,” Computers & Education 215 (July 2024): 105049.

44. Q. Chen and Z. Yan, “Does Multitasking with Mobile Phones Affect Learning? A Review,” Computers in Human Behavior 54 (January 2016): 34–42.

[Illustrations by Paul Zwolak]