Misinformation damages society in a multitude of ways, and we ignore its negative impact at our peril—especially regarding climate change. While studying how misinformation does damage, scientists have also researched and developed approaches to help build the public’s resilience against misinformation. Teachers are in a powerful position to implement these evidence-based strategies—not only playing a pivotal role in building students’ resilience but also providing deeper, more engaging science education that equips students with sorely needed critical-thinking skills. Much of this article is devoted to sharing those strategies so that teachers and students can effectively counter misinformation, but first, let’s explore how misinformation does damage.

How Misinformation Damages Society

The most obvious way that misinformation does damage is by causing people to believe misconceptions or reducing belief in accurate facts. One experiment found that just a handful of cherry-picked statistics about climate change confused people and reduced their acceptance that climate change was happening.1 After being shown the misinformation, they also became less supportive of action to reduce climate change. Other, more subtle impacts of misinformation are also dangerous, such as eroding trust in scientific institutions and scientists. As we’ve seen throughout the COVID-19 pandemic, distrust of health experts has led to less adoption of safe behaviors like mask wearing and vaccination, which endangers both individuals and public health.

The effect of misinformation is not identical across different segments of the public. In research my colleagues and I conducted, we found that misinformation about climate change was strongly persuasive with political conservatives but had little impact on political liberals.2 This means that as misinformation washes over society, it splits the public further apart, exacerbating an already partisan populace. Misinformation polarizes.

Arguably, one of the most insidious aspects of misinformation is its capacity to cancel out accurate information. In an experiment testing the impact of misinformation that cast doubt on the scientific consensus on climate change, participants were shown conflicting pieces of information.3 One group was shown accurate information about the 97 percent agreement among climate scientists that humans are causing climate change. This consensus message had a strong positive effect, increasing public perceptions of consensus and acceptance of global warming. Another group of participants was shown an excerpt from a prominent example of climate misinformation: the Global Warming Petition Project. This misinformation lists over 31,000 signatories of a petition stating that humans aren’t disrupting our climate, arguing that there is no scientific consensus on climate change. As you’d expect, it had a negative impact—reducing people’s climate change perceptions. A third group of participants was shown both the consensus and the misinformation. With this group, fact and myth cancelled each other out. This result has significant consequences for scientists, educators, and climate communicators. It means even if we use well-tested, effective science explanations, our efforts can be cancelled out by misinformation.

When people are presented with conflicting pieces of information and don’t know how to resolve the conflict, the danger is they disengage and therefore fail to learn from the accurate information. Unfortunately, the information landscape is an uneven playing field. Misinformation doesn’t have to be coherent or based on evidence to have an impact. Just by existing, it can cancel out our efforts to communicate accurate facts.

This means that teaching the facts, while necessary, is insufficient. If we fail to equip students with the ability to distinguish between facts and misinformation, we leave them susceptible to being misinformed and our facts vulnerable to being undermined. Fortunately, this dynamic also points to a solution to misinformation. If the problem is that people can’t resolve the conflict between fact and myth, then the answer is to help them resolve that conflict. We achieve this by explaining the misleading techniques that promoters of misinformation use to distort the facts.

Inoculating the Public Against Misinformation

Inoculation theory is a branch of psychological research that offers a framework to help counter misinformation. It takes the principle of vaccination—building resistance to a disease by being exposed to a weak version of the disease—and applies it to knowledge.4 When people are exposed to a weakened form of misinformation, they can develop “cognitive antibodies” or “immunity” against the misinformation.

What do I mean by a “weakened form of misinformation”? An inoculating message consists of two elements: warning people of the threat of being misled—which is important in putting people on guard against the danger of misleading persuasion—and then providing counterarguments explaining how the misinformation is wrong. In an extension of the experiment described above with the climate consensus and misinformation, there was an inoculation that explained the different ways that the Global Warming Petition Project was misleading.5 First, it was an online petition with little quality control, resulting in Star Wars characters and Spice Girls appearing on the list of signatories. Second, while 31,000 seems like a large number, it’s a tiny fraction of the millions of Americans with degrees in science. Lastly, while it lists people with all types of science degrees, such as computer scientists, medical scientists, and engineers, less than 1 percent of the signers have expertise in climate science. When participants in the experiment were inoculated before being shown the misinformation, the facts had a positive effect and the misinformation was mostly neutralized. Crucially, this held true for Democrats, Republicans, and Independents; the results for Republicans were especially heartening because other facets of the study indicated that they were, on average, predisposed to believe the misinformation.

Around the same time that this research was happening, my colleagues and I were conducting similar research, also testing how to inoculate people against climate misinformation.6 Coincidentally, we even used the same misinformation: the Global Warming Petition Project. Overall, our results were similar, showing that people at the conservative end of the political spectrum were strongly influenced by misinformation, while people who were politically liberal were relatively unaffected—and also showing that inoculation can be effective. Notably, we used a different inoculation technique. Before being given the misinformation, one group in our study learned about fake experts. This is where a person appeals to their own expertise and yet doesn’t have relevant expertise. Fake experts are frequently deployed to confuse the public and can be highly persuasive. Fortunately, our general inoculation against fake experts completely neutralized the misinformation and, importantly, was effective across the political spectrum. This tells us that whether people are politically conservative or liberal, no one likes being misled.

Facts or Logic? Yes!

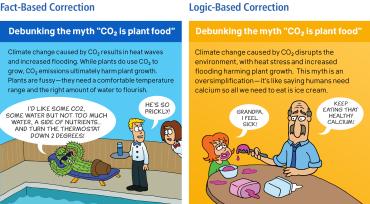

My research has focused on two main types of inoculation: fact based and logic based.7 Fact-based corrections explain how the misinformation is false or misleading. For example, you can show how the myth “we should emit CO2 because it’s good for plants” is misleading by explaining the various factors plants need to flourish, such as a regular water supply and comfortable temperature range. Emitting CO2 causes climate change, which disrupts these conditions.

Logic-based corrections involve explaining the rhetorical techniques or logical fallacies used in misinformation. For example, you can explain how the “CO2 is plant food” myth uses the fallacy of oversimplification. By focusing on a single factor like CO2 fertilization, it ignores other factors that plants need to grow. This myth is like arguing “our bodies need calcium, so all we need to eat is ice cream,” despite the fact that our bodies need a balanced diet.

Both of these approaches are effective.8 Whenever possible, I try to use both in combination—explain the facts, introduce the myth, and then reconcile the conflict between the two by explaining the myth’s fallacy. This fact-myth-fallacy format is the recommended structure for debunking (or prebunking) laid out in The Debunking Handbook 2020 (which is available for free in multiple languages here).

Although factual corrections are often crucial, the logic-based approach offers some unique benefits. Explaining the rhetorical technique used in one topic can help build resistance to the same technique used in a different topic. For example, consider the two inoculations described above for the Global Warming Petition Project. Pointing out that many of the signatories are computer scientists and not climate scientists is a factual correction that applies to the petition. Highlighting that such fake experts are widely used to intentionally mislead puts people on guard for the petition and for other situations in which claims rely on “experts.” In our research, my colleagues and I explained how tobacco companies used the fake expert strategy* to cast doubt on the scientific evidence linking smoking to negative health impacts.9 When people were subsequently shown misinformation about climate change using the same fake expert technique, the misinformation no longer had an impact. Logic-based inoculation conveys immunity across topics—it’s like a universal vaccine against misinformation.†

In a different experiment, my colleagues and I directly compared the logic-based and fact-based approaches in addressing the climate myth that we should emit more carbon dioxide because it’s plant food.10 We also tested whether order mattered when encountering misinformation and corrections. We asked whether debunking—seeing the correction after the misinformation—had a different effect than prebunking—seeing the correction before the misinformation. When people were shown the logic-based correction, it reduced belief in the myth regardless of whether it came as a prebunking or a debunking. Order didn’t matter with the logic-based correction. But with the fact-based correction, order did matter: prebunking did not work. If the fact-based correction was the last thing people were shown, the myth was successfully debunked. But if the misinformation was the last thing people read, the myth cancelled out the facts. This underscores the inherent danger of misinformation and its ability to cancel out our factual explanations—especially since, in the real world, people see a mix of facts and myths regularly.

The bottom line is that communicating both the facts and the rhetorical techniques used to cast doubt on facts is important. In practice, I try to incorporate both. But the unique benefits of logic-based corrections are crucially important; to prevent misinformation from spreading, raising awareness of the logical fallacies and rhetorical techniques used to intentionally mislead is imperative.

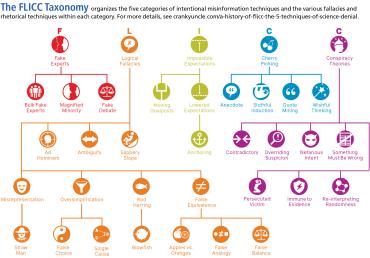

Giving Misinformation the FLICC

In order to explain misleading techniques, it helps to have a vocabulary to describe them. I’ve explored different ways of organizing and explaining misinformation techniques, but I’ve always come back to FLICC: fake experts, logical fallacies, impossible expectations, cherry picking, and conspiracy theories.11 Over the years, I’ve expanded these five categories into an ever-growing taxonomy (below) of rhetorical techniques, logical fallacies, and conspiratorial traits.12

Along with documenting the landscape of misinformation techniques, I’ve also explored approaches to more effectively explain them to the public. One powerful approach is parallel argumentation, which involves transplanting the flawed logic from a fallacious argument into an analogous situation (e.g., I used this strategy above to clarify that just as humans need more than ice cream, plants need more than CO2).13 Parallel arguments have strong pedagogical value, allowing educators to explain abstract logical concepts in concrete terms, typically using examples from everyday life.14 One key benefit—particularly for students who are just beginning to learn science—is that by focusing on errors in reasoning, we can show how misinformation is misleading while sidestepping the need to provide complicated explanations that rely on extensive science knowledge.

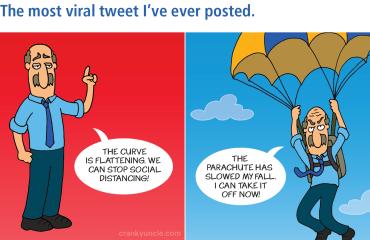

Parallel argumentation is also conducive to attention-grabbing and humorous applications. Through several studies, my colleagues and I have found cartoons with parallel arguments are effective in debunking misinformation about vaccines15 and climate change.16 Using eye-tracking data, we found humorous cartoons successful in discrediting misinformation because people spent more time paying attention to the cartoons than to corrections delivered in other, less engaging ways.17 As a bonus, humorous corrections were more likely to be shared, increasing their chances of going viral. (The most viral tweet I ever posted, reaching several million impressions, was a cartoon debunking COVID-19 misinformation; it is shown below.)

The reality that our factual explanations are vulnerable to misinformation highlights the importance of logic-based corrections. It’s like shielding our factual explanations with protective bubble wrap as we send them out into a cold, hard world. And it turns out that the classroom is the ideal venue for building resilience against misinformation.

Inoculation in the Classroom

How does inoculation work in an educational context? There is a teaching approach known as refutational teaching or agnotology-based learning (I personally refer to it as misconception-based learning). This approach involves teaching science by directly tackling scientific misconceptions. For example, one can explain the carbon cycle by addressing how the phenomenon might be misunderstood. Every year, vast amounts of carbon move through our climate system. In the fall, plants give up billions of metric tons of carbon dioxide into the atmosphere as leaves fall and decompose. In the spring, plants absorb the carbon dioxide back from the air as leaves grow back. This system is balanced, with the amount of carbon absorbed during the spring roughly equal to the amount of carbon released during the fall.

One myth about climate change is that human CO2 emissions don’t matter because they’re tiny compared with natural CO2 emissions. After all, we emit around 30 billion metric tons per year, while nature emits over 700 billion metric tons every year. This myth ignores that nature is roughly in balance, with natural absorptions matching natural emissions.18 Our CO2 emissions disrupt the natural balance, resulting in atmospheric CO2 increasing to levels not seen in millions of years. By directly addressing the myth, we not only address a misconception about the carbon cycle but also deepen our understanding of how human activity has disrupted the natural balance.
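The arithmetic behind this myth can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions: it uses the round figures quoted above (700 and 30 billion metric tons per year), and it treats natural absorption as exactly equal to natural emissions, which the text describes only as roughly true. The function and variable names are hypothetical, chosen for this example.

```python
# Illustrative figures from the text, in billions of metric tons of CO2 per year.
NATURAL_EMISSIONS = 700  # the text says "over 700"; a round figure is assumed here
HUMAN_EMISSIONS = 30     # the text says "around 30"


def net_annual_change(natural_emissions, natural_absorption, human_emissions=0):
    """Return the net amount of CO2 added to the atmosphere in one year."""
    return natural_emissions + human_emissions - natural_absorption


# Assumption: natural absorption exactly matches natural emissions.
natural_absorption = NATURAL_EMISSIONS

without_humans = net_annual_change(NATURAL_EMISSIONS, natural_absorption)
with_humans = net_annual_change(NATURAL_EMISSIONS, natural_absorption, HUMAN_EMISSIONS)

# The myth looks only at this ratio and ignores the absorption side of the ledger.
share_of_gross = HUMAN_EMISSIONS / (NATURAL_EMISSIONS + HUMAN_EMISSIONS)

print(f"Net change without human emissions: {without_humans}")
print(f"Net change with human emissions: {with_humans}")
print(f"Human share of gross emissions: {share_of_gross:.1%}")
```

Under these assumptions, human emissions are a small share of gross emissions yet account for the entire net increase. Comparing emissions alone, as the myth does, leaves out the absorption term that makes the natural cycle balance.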

Misconception-based learning is one of the most powerful ways of teaching science. It’s been shown to result in higher learning gains, and, importantly, the gains last longer relative to standard lessons.19 Students find misconception-based learning more engaging,20 and it appears to have the curious benefit of instilling appropriate humility. One study compared the effect of standard lessons versus misconception-based lessons, finding that students made stronger learning gains from the misconception-based lessons21—but students who received the standard lessons were more confident of their understanding (despite recording lower learning gains). Unfounded confidence can be a roadblock to learning, and misconception-based learning reduces that barrier.

Unfortunately, there is a dearth of educational resources to help teachers apply misconception-based learning. Over the last decade, I’ve been working on developing resources in a number of different contexts to address this deficit. For high school educators, I collaborated with the National Center for Science Education to develop a curriculum teaching fundamental concepts of climate science while addressing common myths and misconceptions about climate change.‡ For college professors, I coauthored a climate textbook with Weber State University’s Dan Bedford in which each chapter not only explains key concepts regarding climate change but also debunks common myths associated with them.22 And in a more informal learning context, I led a collaboration between the online learning team at the University of Queensland and the team of climate misinformation debunkers at Skeptical Science to develop a massive open online course, “Making Sense of Climate Science Denial.”§ This course features around 50 short videos explaining the facts of climate change as well as debunking related myths and exposing the logical fallacies in each myth.

The purpose of misconception-based learning is to improve students’ science literacy and boost their critical-thinking skills. But in recent years, a new approach to inoculation has emerged that may offer an even more engaging and interactive way to neutralize misinformation.

Gamifying Critical Thinking to Build Resilience

Most examples of inoculation against misinformation are what researchers describe as passive inoculation—one-way communication where the audience passively receives the inoculating message. But an exciting new approach is active inoculation. This involves learning the techniques of misinformation by actively employing them. Active inoculation can be applied in a number of ways, particularly in the classroom; for example, students could do role-playing exercises or purposely attempt to incorporate misleading techniques in their writing.

Among those of us who study inoculation, there’s been an increasing focus on digital games, which are a particularly engaging and scalable approach. Generally speaking, games that are designed to be both fun and educational are known as serious games.23 In the last few years, a growing number of serious games have focused on building resilience against misinformation through active inoculation.

The Bad News game is an early example of this approach, where the goal of the game is to become a fake news merchant. Through the course of the game, players learn about six techniques of fake news, such as emotive posts or impersonating authoritative sources. Preliminary evidence indicates that by the end, players have become somewhat more aware of and resistant to misinformation.24 The Bad News game focuses on media literacy, so that players become better able to assess the reliability of online information sources such as news websites and Twitter accounts. New games adapting the Bad News game template have also focused on misinformation undermining democracy25 and COVID-19 misinformation.26

While media literacy is an important skill for students to develop, critical thinking is a broad umbrella. As well as assessing media sources, students also need to be able to assess arguments, whether on social media, on mainstream media, or in conversation. Over the last few years, I’ve been working with Autonomy Co-op to develop a game that teaches players how to spot misleading rhetorical techniques—the kinds of fallacious arguments we might hear from our cranky uncle.

Getting Cranky to Stop Misinformation

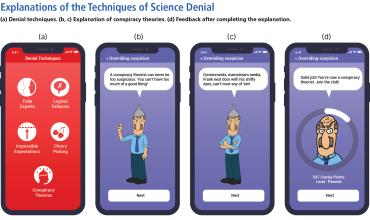

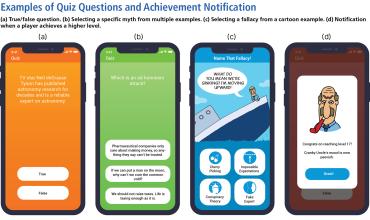

The Cranky Uncle game (available for free at crankyuncle.com/game) is designed to build resilience against the techniques of science denial. The player’s goal is to become a science-denying “cranky uncle” by learning about a range of misleading rhetorical techniques used to reject the conclusions of the scientific community. By adopting the mindset of a cranky uncle—inspired by the active inoculation approach—players develop a deeper understanding of science denial techniques. Although I intend to conduct several more studies to determine the effectiveness of the game and improve it, preliminary results show increases in critical thinking. Ultimately, the intent is to inoculate players against misleading persuasion attempts in the future.

The game consists of two elements. First, Cranky Uncle explains the techniques of science denial. This includes the five categories of FLICC as well as many of the fallacies and techniques found in the FLICC taxonomy. But this brings us to a fundamental psychological challenge when trying to build resilience against misinformation. Critical thinking is hard! Our brains are hardwired to make fast, snap decisions rather than slowly reason through problems.**

However, there is a way to make critical thinking faster and less difficult: mastering expert heuristics. When a person practices a difficult task over and over, the slow thinking processes required to complete the difficult task gradually evolve into fast thinking responses. For example, consider the difference between teenagers who are learning to drive—struggling to signal a turn, check the rear view, and lightly press the brake just to go around the block—and an adult who hops in the car and runs errands virtually on autopilot. With enough practice, even very complex mental tasks can become easy. This is where games offer a potential solution to building resilience against misinformation.

The second feature of the Cranky Uncle game is quizzes in which players try to spot fallacies in examples of misinformation. Their purpose is to use gameplay elements such as collecting points and moving to higher levels to motivate players to practice critical thinking, over and over again. The more quizzes completed, the quicker and easier it gets to spot common patterns in deceptive arguments. For example, the false-choice fallacy, otherwise known as a false dichotomy, is widely used in misinformation. The tell-tale red flag for this argument is being presented with an either-or choice. When you see that form of argument, consider whether other options might be available besides the two presented (or perhaps both options could be true at the same time).

The game approach does have some limitations. For example, spotting the technique of cherry picking can be difficult if you don’t have enough relevant background knowledge. But even in this case, the game helps players spot one common form of cherry picking known as anecdotal thinking. This powerful form of misinformation uses compelling narratives and can be quite persuasive. But if players learn how to spot the use of single examples (i.e., anecdotes) as evidence for an argument, they likely will become less vulnerable to being misled by that form of cherry picking.

The danger of serious games is players can lose interest in playing the game if they see it as no fun and all education. Fun is one of the main factors determining whether players are willing to play a game again.27 By featuring an ornery cartoon character as the player’s mentor guiding them through the game, as well as humorous examples of logical fallacies in the quizzes, I hope this pitfall is avoided for most youth playing Cranky Uncle. In the Cranky Uncle game, humor is an integral part of the learning process, with parallel arguments in cartoon form providing not only humor but also instructive illustrations of fallacious logic. This makes the game an engaging tool for the classroom, afterschool clubs, and summer programs.

By October 2021, just 10 months after the Cranky Uncle game was released, educators had signed up to use the game in over 38 U.S. states (as well as 16 other countries)—in both red and blue states—which gives me hope that the game is reaching communities across the political spectrum. Further, the game is being adopted in a range of subjects as diverse as biology, environmental science, English, media studies, and philosophy. This demonstrates the growing conviction that critical thinking is needed in any subject where misinformation can be found (i.e., all subjects). Another intriguing development is that the game is being adopted in classes from middle school to graduate school (it’s even in a few elementary classes). My colleagues and I are in the process of collecting data to assess the game’s effectiveness at different levels.

In the classroom, an activity that seems beneficial after students have played the game several times is to role-play. When giving guest lectures in college classes on climate change, I have played the cranky uncle while the professor tries to convince me of climate change. After this demonstration, we divide the class into small groups and the students conduct their own role-play exercises. Afterward, students often discuss how much easier it is to be the cranky uncle and how difficult it is to respond to fallacious arguments in real time.

Misinformation is an immense societal problem—ever present and ubiquitous. This necessitates solutions that can reach significant proportions of the population at a scale commensurate with the problem. But further, misinformation is complex and interconnected—with cultural, psychological, and technological factors. This complexity means we need holistic solutions. I’m convinced we need interdisciplinary solutions that combine science, technology, and the arts. Science provides evidence-based approaches to addressing misinformation, such as logic-based inoculation. Art can help package educational material in engaging, memorable formats. Technology allows the development of games that are both interactive and scalable.

The Cranky Uncle game brings together these diverse threads, synthesizing research on inoculation, critical thinking, and science humor, wrapped in a technological package that is accessible and interactive. But there is no magic bullet that will neutralize the misinformation problem. This is a multi-front struggle, requiring public campaigns and technical solutions implemented in collaboration with social media platforms. Nevertheless, educators are in a unique and powerful position in the fight against misinformation.

John Cook is a postdoctoral research fellow with the Monash Climate Change Communication Research Hub at Monash University in Australia; he researches how to use critical thinking to build resilience against misinformation. In 2020, he published the book Cranky Uncle vs. Climate Change and the Cranky Uncle game; both combine critical thinking and cartoons to build resilience against misinformation (visit crankyuncle.com). Cook also founded Skeptical Science, an award-winning website that debunks climate myths. Among his many papers and books are two coauthored college textbooks, Climate Change: Examining the Facts and Climate Change Science: A Modern Synthesis, and two books to address climate misinformation, The Debunking Handbook 2020 and The Conspiracy Theory Handbook.

*To learn more about how tobacco companies have intentionally misled the public, see “Mercenary Science: A Field Guide to Recognizing Scientific Disinformation.”

†My colleagues and I have not yet been able to conduct studies to determine how long this inoculation may last. Generally, the research shows inoculation effects fade over time, so “booster shots” are required; it also shows logic-based inoculations last longer than factual explanations.

‡To download this free curriculum, visit here.

§To take this free course, visit here.

**To learn about how our minds work and why critical thinking requires so much effort, see “Why Don’t Students Like School? Because the Mind Is Not Designed for Thinking” in the Summer 2009 issue of American Educator.

Endnotes

1. M. Ranney and D. Clark, “Climate Change Conceptual Change: Scientific Information Can Transform Attitudes,” Topics in Cognitive Science 8, no. 1 (2016): 49–75.

2. J. Cook, S. Lewandowsky, and U. Ecker, “Neutralizing Misinformation Through Inoculation: Exposing Misleading Argumentation Techniques Reduces Their Influence,” PLOS ONE 12 (2017): e0175799.

3. S. van der Linden et al., “Inoculating the Public Against Misinformation About Climate Change,” Global Challenges 1, no. 2 (2017): 1600008.

4. J. Compton, B. Jackson, and J. Dimmock, “Persuading Others to Avoid Persuasion: Inoculation Theory and Resistant Health Attitudes,” Frontiers in Psychology 7 (2016): 122.

5. Van der Linden et al., “Inoculating the Public.”

6. Cook, Lewandowsky, and Ecker, “Neutralizing Misinformation.”

7. J. Banas and G. Miller, “Inducing Resistance to Conspiracy Theory Propaganda: Testing Inoculation and Metainoculation Strategies,” Human Communication Research 39, no. 2 (2013): 184–207.

8. P. Schmid and C. Betsch, “Effective Strategies for Rebutting Science Denialism in Public Discussions,” Nature Human Behaviour 3 (2019): 931–39.

9. Cook, Lewandowsky, and Ecker, “Neutralizing Misinformation.”

10. E. Vraga et al., “Testing the Effectiveness of Correction Placement and Type on Instagram,” International Journal of Press/Politics 25, no. 4 (2020): 632–52.

11. M. Hoofnagle, “Hello Scienceblogs,” Denialism Blog, April 30, 2007, scienceblogs.com/denialism/about.

12. J. Cook, “Deconstructing Climate Science Denial,” in Edward Elgar Research Handbook in Communicating Climate Change, ed. D. Holmes and L. Richardson (Cheltenham, UK: Edward Elgar Publishing, 2020).

13. J. Cook, P. Ellerton, and D. Kinkead, “Deconstructing Climate Misinformation to Identify Reasoning Errors,” Environmental Research Letters 13, no. 2 (2018): 024018.

14. A. Juthe, “Refutation by Parallel Argument,” Argumentation 23, no. 2 (2009): 133–69.

15. S. Kim, E. Vraga, and J. Cook, “An Eye Tracking Approach to Understanding Misinformation and Correction Strategies on Social Media: The Mediating Role of Attention and Credibility to Reduce HPV Vaccine Misperceptions,” Health Communication (July 7, 2020).

16. Vraga et al., “Testing the Effectiveness.”

17. E. Vraga, S. Kim, and J. Cook, “Testing Logic-Based and Humor-Based Corrections for Science, Health, and Political Misinformation on Social Media,” Journal of Broadcasting & Electronic Media 63, no. 3 (2019): 393–414.

18. G. Wayne, “How Do Human CO2 Emissions Compare to Natural CO2 Emissions,” Skeptical Science, skepticalscience.com/human-co2-smaller-than-natural-emissions.htm.

19. J. McCuin, K. Hayhoe, and D. Hayhoe, “Comparing the Effects of Traditional vs. Misconceptions-Based Instruction on Student Understanding of the Greenhouse Effect,” Journal of Geoscience Education 62, no. 3 (2014): 445–59.

20. L. Mason, M. Gava, and A. Boldrin, “On Warm Conceptual Change: The Interplay of Text, Epistemological Beliefs, and Topic Interest,” Journal of Educational Psychology 100, no. 2 (2008): 291.

21. D. Muller and M. Sharma, “Tackling Misconceptions in Introductory Physics Using Multimedia Presentations,” in Proceedings of the Australian Conference on Science and Mathematics Education 58 (2007).

22. D. Bedford and J. Cook, Climate Change: Examining the Facts (Santa Barbara, CA: ABC-Clio, 2016).

23. C. Girard, J. Ecalle, and A. Magnan, “Serious Games as New Educational Tools: How Effective Are They? A Meta-Analysis of Recent Studies,” Journal of Computer Assisted Learning 29 (2013): 207–19.

24. J. Roozenbeek and S. van der Linden, “The Fake News Game: Actively Inoculating Against the Risk of Misinformation,” Journal of Risk Research 22, no. 5 (2019): 1–11; and J. Roozenbeek and S. van der Linden, “Fake News Game Confers Psychological Resistance Against Online Misinformation,” Palgrave Communications 5, no. 1 (2019): 12. To play Bad News, visit getbadnews.com.

25. J. Roozenbeek and S. van der Linden, “Breaking Harmony Square: A Game That ‘Inoculates’ Against Political Misinformation,” Harvard Kennedy School Misinformation Review 1, no. 8 (2020). To play Harmony Square, visit harmonysquare.game/en.

26. M. Basol et al., “Towards Psychological Herd Immunity: Cross-Cultural Evidence for Two Prebunking Interventions Against COVID-19 Misinformation,” Big Data & Society 8, no. 1 (2021). To play Go Viral!, visit goviralgame.com/books/go-viral.

27. A. Imbellone, B. Botte, and C. Medaglia, “Serious Games for Mobile Devices: The InTouch Project Case Study,” International Journal of Serious Games 2, no. 1 (2015).

[Illustrations courtesy of John Cook]